Databricks and Azure Synapse: What to Choose, Considering Cost, Speed, and AI Readiness

Databricks or Azure Synapse: which is the better platform for advanced analytics and AI, and which one fits your company best? Examined, expanded, and explained by our senior engineers.

Subu

Apr 30, 2026 | 7 mins

When enterprises need to handle growing volumes of data while preparing for AI initiatives, two platforms consistently dominate the conversation. Databricks is a lakehouse-centric analytics and AI platform built on optimized Apache Spark, designed for big data processing, machine learning, and real-time pipelines.

Meanwhile, Azure Synapse is Microsoft's unified analytics service that combines SQL data warehousing, Spark pools, data integration, and BI tools like Power BI for structured analytics and enterprise reporting.

This choice matters because the people who typically make it (data engineers, platform architects, analytics leaders, and CTOs) know that it extends far beyond feature comparisons. Your platform choice shapes three critical areas: architecture decisions (multi-cloud flexibility versus Azure ecosystem integration), team skill requirements (SQL-focused analysts versus Spark and ML engineers), and cost models (variable autoscaling compute versus provisioned or pay-per-query SQL pricing). Getting this wrong increases complexity, inflates cloud spend, and delays time to value for AI and analytics initiatives.

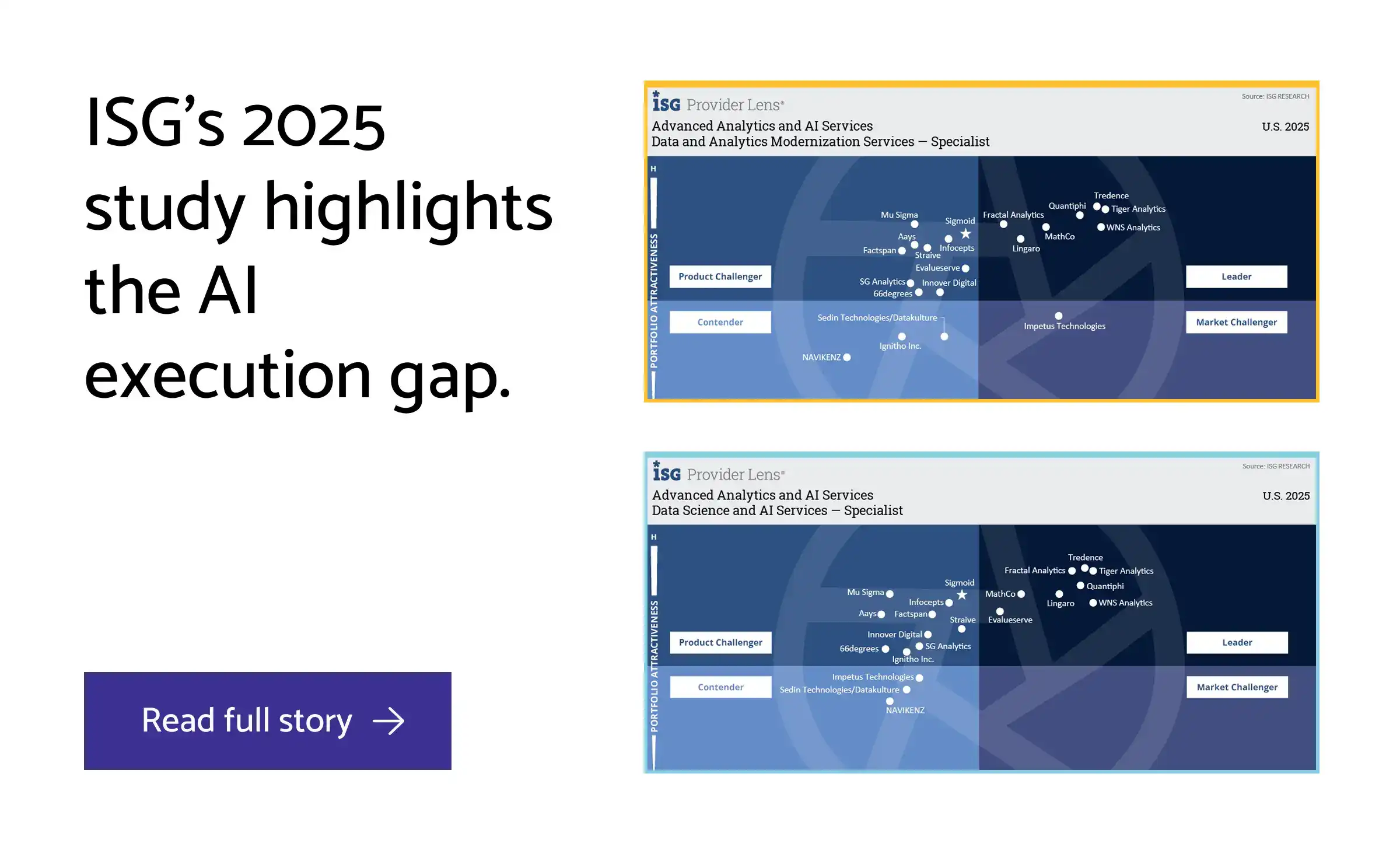

Recent analyst insights show enterprises consolidating data tools while prioritizing AI readiness and cloud cost control, making platform selection more strategic than ever. Consider an Azure-first enterprise with expanding ELT workflows, growing BI demands, and emerging ML requirements: they might standardize on Synapse for familiar SQL analytics, adopt Databricks for advanced data science, or run both platforms efficiently on Azure Data Lake. The right answer isn't "which platform is universally better"—it's which fits your specific workloads, operating model, and business constraints.

👉 We have already compared Microsoft Fabric vs Azure Synapse. Check it out!

Databricks vs Azure Synapse at a Glance: Where the Platforms Differ Most

Azure Synapse and Databricks represent fundamentally different architectural philosophies: Synapse is a managed SQL data warehouse with embedded Spark capabilities, while Databricks is managed Apache Spark optimized for data engineering and machine learning. This distinction shapes every downstream decision about workload suitability, team structure, and ecosystem fit.

| Decision Area | Databricks | Azure Synapse | Best Fit Signal |
| --- | --- | --- | --- |
| Core purpose | Big data processing, ML/AI, lakehouse architecture with Delta Lake ACID transactions | Data warehousing, structured analytics, BI reporting, ETL/ELT pipelines | Choose Databricks for exploratory analytics and ML; Synapse for governed reporting and compliance-heavy environments |
| Processing engine and compute model | Optimized Apache Spark runtime with auto-scaling clusters; pay-per-use DBUs | Dual engine: SQL pools (dedicated/serverless) + Spark pools; DWU or serverless pay-per-query | Databricks for variable, unpredictable workloads; Synapse for predictable, structured queries |
| SQL and data warehousing | Photon-optimized Spark SQL; secondary to core Spark focus | Purpose-built SQL engine with MPP architecture; native T-SQL support | Synapse for SQL-first teams and legacy warehouse migrations; Databricks for SQL as a secondary interface |
| Data engineering and ETL/ELT | Strong; Spark-native transformations with Delta Lake reliability | Built-in Synapse Pipelines for orchestration; integrates Azure Data Factory | Databricks for complex transformations; Synapse for pipeline orchestration within Azure |
| Real-time streaming | Unmatched; Spark Structured Streaming handles high-volume, low-latency workloads | Supported via Synapse Spark; integrates Azure Stream Analytics | Databricks for mission-critical real-time analytics; Synapse for supplementary streaming |
| ML, notebooks, and AI workflows | Strong ML ecosystem: MLflow, AutoML, Feature Store, Model Serving, Unity Catalog | Limited; integrates Azure Machine Learning; T-SQL focus limits data scientist productivity | Databricks for ML-centric teams; Synapse for BI-centric organizations |
| Azure ecosystem integration | Multi-cloud capable (Azure, AWS, GCP); integrates Azure services but not Azure-first | Deep Azure integration: Power BI, Data Lake Storage, Cognitive Services, seamless workspace | Synapse for Azure-locked organizations; Databricks for hybrid/multi-cloud strategies |
| Pricing and cost predictability | Pay-per-use (DBUs); scales with consumption; unpredictable for variable workloads | Mixed: fixed (dedicated pools) + serverless (pay-per-query); more predictable | Synapse for budget-constrained, predictable workloads; Databricks for growth-stage, variable demand |

Capabilities that change the decision fastest

Three architectural differences create the clearest separation between platforms. First, Databricks' Delta Lake brings ACID guarantees to data lakes, enabling reliable ML pipelines on semi-structured and unstructured data, while Synapse remains optimized for structured, relational data. Organizations processing semi-structured or unstructured data (IoT sensor readings, application logs, images) should favor Databricks; those with clean, tabular data benefit from Synapse's SQL-first design.

Second, Databricks' Structured Streaming significantly outperforms Synapse for high-volume, low-latency workloads. This becomes non-negotiable for financial trading, fraud detection, or IoT analytics, where processing delays translate directly into business cost. Third, organizational fit often trumps feature parity: Synapse suits SQL analysts and BI teams, while Databricks attracts data engineers and data scientists.

Can I Use Azure Synapse and Databricks Together?

You can, but be clear about where the two platforms overlap, because that overlap is where confusion starts. Problems arise when an organization forces one platform to serve both analytical and engineering roles: Synapse pushes data scientists into SQL constraints, while Databricks requires analysts to adopt notebooks and Spark SQL. A retail company analyzing point-of-sale transactions should use Synapse for BI dashboards and Power BI integration. The same retailer building real-time inventory optimization from IoT streams and ML models should use Databricks, potentially sharing processed data back to Synapse through shared Delta Lake storage for analyst access.

Which Platform Wins for Your Workloads? SQL, Spark, Streaming, and ML

The right platform choice often comes down to matching your dominant workloads with each platform's core strengths. While both Databricks and Azure Synapse can handle diverse analytics tasks, their architectures favor different use cases—and your team's expertise plays as large a role as the technical features.

SQL-centric analytics and warehouse-first teams

Azure Synapse dominates traditional data warehousing with its dedicated and serverless SQL pools, massively parallel processing (MPP) architecture, and native Power BI integration. If your analytics workload centers on structured data, T-SQL expertise, and dashboard-driven reporting, Synapse's serverless model offers cost-effective pay-per-query pricing for ad hoc analysis.
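To make the pay-per-query model concrete, here is a minimal sketch of how a monthly bill for ad hoc analysis could be estimated when charges are proportional to data scanned. The price per terabyte, the query names, and the volumes are placeholder assumptions for illustration, not published rates; check the current Azure pricing page for your region before relying on any figure.

```python
from dataclasses import dataclass

# Hypothetical rate for illustration only; look up the current price for your region.
ASSUMED_PRICE_PER_TB_SCANNED = 5.00


@dataclass
class AdHocQuery:
    name: str                   # hypothetical query label
    gb_scanned_per_run: float   # data read by one execution
    runs_per_month: int


def monthly_serverless_cost(queries, price_per_tb=ASSUMED_PRICE_PER_TB_SCANNED):
    """Estimate monthly spend when billing is proportional to data scanned."""
    total_gb = sum(q.gb_scanned_per_run * q.runs_per_month for q in queries)
    return (total_gb / 1024) * price_per_tb


queries = [
    AdHocQuery("daily_sales_rollup", gb_scanned_per_run=40.0, runs_per_month=30),
    AdHocQuery("finance_month_end", gb_scanned_per_run=512.0, runs_per_month=2),
]
print(f"Estimated monthly cost: ${monthly_serverless_cost(queries):.2f}")
```

Because cost tracks the volume of data read, partitioning and columnar formats such as Parquet lower the bill by reducing what each query scans.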

Finance and BI teams running ETL/ELT pipelines on relational datasets will find Synapse's unified workspace more intuitive than learning Spark SQL semantics. The platform's Azure ecosystem integration means existing SQL Server skills transfer directly, and governance follows familiar patterns.

Best fit: Choose Synapse when your primary need is warehouse-scale analytics with strong SQL tooling and your team already thinks in terms of traditional data warehousing patterns.

Data engineering, streaming, and Spark-native pipelines

Databricks excels in big data engineering through its highly optimized Apache Spark runtime, Delta Lake for ACID transactions, and auto-scaling clusters that handle variable workloads efficiently. For streaming analytics, Structured Streaming provides low-latency processing of diverse data types—from JSON logs to IoT sensor feeds.

Manufacturing or retail companies processing real-time data streams benefit from Databricks' native Spark optimization and from Delta Lake time travel, which lets teams query earlier table versions for auditing. The platform handles schema evolution and mixed file formats better than Synapse's Spark pools, which lag in both optimization and streaming performance.

Best fit: Choose Databricks when you're building Spark-native data lakes, processing high-volume streams, or need advanced transformation logic on semi-structured data.

Machine learning, notebooks, and collaborative development

Databricks wins hands down on ML infrastructure, with MLflow for experiment tracking, AutoML for citizen data scientists, Feature Store for reusable features, and multi-language notebooks supporting Python, Scala, R, and SQL. Git integration enables proper version control, while Unity Catalog provides enterprise-grade governance across ML workflows.

Synapse supports basic machine learning through Azure ML integration but lacks the depth of Databricks' MLOps capabilities. Data science teams building recommendation engines, forecasting models, or computer vision applications will find Databricks' collaborative repos and model serving infrastructure more mature.

Best fit: Choose Databricks when machine learning is central to your analytics strategy and you need notebook-driven collaboration with robust MLOps support.

📍 Suggested read: Microsoft Fabric vs Databricks: What to choose for analytics and AI workloads

The Real Decision Criteria: TCO, Governance, Team Skills, and When a Hybrid Stack Wins

Moving beyond feature checklists, your platform choice ultimately depends on business realities that affect long-term success. Recent FinOps research shows that tooling decisions often fail due to poor cost management and operational complexity, rather than missing capabilities. The right evaluation framework addresses these implementation trade-offs head-on.

A 5-point enterprise selection framework

1. Workload pattern and concurrency

Signals favoring Databricks: Bursty, experimental workloads with auto-scaling clusters; variable data engineering loads requiring elastic compute

Signals favoring Azure Synapse: Stable, predictable SQL analytics, where pre-purchased Synapse Commit Units (SCUs) enable up to 28% savings

Cost implication: Without FinOps discipline, Databricks consumption can escalate quickly; Synapse overprovisioning inflates TCO for variable loads

2. Team skills and development style

Signals favoring Databricks: Spark/ML expertise; collaborative notebooks suit data engineers handling unstructured, real-time data

Signals favoring Azure Synapse: SQL/Power BI proficiency; low-friction adoption for Microsoft ecosystem teams

Cost implication: Skill gaps drive retraining costs and erode productivity during adoption phases

3. Governance and security operating model

Signals favoring Databricks: Granular Unity Catalog for diverse, multi-cloud workloads; advanced lineage across complex engineering pipelines

Signals favoring Azure Synapse: Seamless Microsoft Purview integration with unified Microsoft Entra ID (formerly Azure AD) controls for SQL/BI-centric teams

Cost implication: Immature governance amplifies Databricks setup complexity; Synapse simplifies but limits non-Azure flexibility

4. Cost optimization and spend predictability

Signals favoring Databricks: FinOps-mature teams that optimize consumption-based DBUs effectively through monitoring and automation

Signals favoring Azure Synapse: Need for budgeting confidence with predictable capacity costs and enterprise agreement discounts

Cost implication: Poor consumption management turns Databricks flexibility into cost escalation; Synapse predictability can mask inefficient resource allocation

5. Future-state architecture, including hybrid considerations

Signals favoring Databricks: Multi-cloud portability requirements; advanced AI/ML pipelines that exceed Azure-native platform limits

Signals favoring Azure Synapse: Deep Azure integration reduces latency and operational overhead for consolidated cloud strategies

Cost implication: Single-platform lock-in creates vendor risk; hybrid architectures demand federation tools that increase integration complexity
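One way to apply the five criteria above consistently is a weighted scorecard. The sketch below is only an illustration: the weights, the 1-to-5 scores, and the margin used to flag a hybrid stack are hypothetical inputs that each organization would set for itself, not values drawn from any benchmark.

```python
# Criteria mirror the five framework points. Weights and scores are hypothetical
# inputs (1 = poor fit, 5 = strong fit), not measured values.
CRITERIA_WEIGHTS = {
    "workload_pattern": 0.25,
    "team_skills": 0.25,
    "governance": 0.15,
    "cost_predictability": 0.20,
    "future_architecture": 0.15,
}


def weighted_score(scores, weights=CRITERIA_WEIGHTS):
    """Return the weighted average fit score for one platform."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def recommend(platform_scores, hybrid_margin=0.5):
    """Pick the higher-scoring platform, or flag a hybrid stack when scores are close."""
    ranked = sorted(
        ((weighted_score(s), name) for name, s in platform_scores.items()),
        reverse=True,
    )
    (best_score, best), (second_score, _) = ranked[0], ranked[1]
    if best_score - second_score < hybrid_margin:
        return "evaluate hybrid"
    return best


example = {
    "Databricks": {"workload_pattern": 4, "team_skills": 2, "governance": 3,
                   "cost_predictability": 2, "future_architecture": 4},
    "Azure Synapse": {"workload_pattern": 3, "team_skills": 5, "governance": 4,
                      "cost_predictability": 4, "future_architecture": 3},
}
print(recommend(example))
```

The value of a scorecard like this lies less in the final number than in forcing stakeholders to agree on the weights before any vendor demo.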

When using both Databricks and Synapse is the smarter move

Many enterprises successfully leverage both platforms—Synapse for SQL-facing analytics and daily reporting, Databricks for engineering-heavy ML pipelines and streaming data processing. This hybrid approach minimizes replatforming risks while optimizing costs through intelligent workload routing. A retail company might use Synapse for transactional analytics and customer dashboards while Databricks processes streaming IoT data for real-time personalization, maximizing each platform's strengths without forcing an artificial consolidation that compromises performance or team productivity.
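Workload routing in a hybrid stack can start as nothing more than an explicit, reviewable mapping. The following is a minimal sketch, assuming hypothetical workload categories and names; the routing rules paraphrase the guidance in this article rather than any feature of either platform.

```python
# Routing rules paraphrase the article's guidance. The category labels are
# hypothetical, not a taxonomy defined by Databricks or Synapse.
ROUTING_RULES = {
    "bi_reporting": "Azure Synapse",
    "sql_warehouse": "Azure Synapse",
    "streaming": "Databricks",
    "ml_training": "Databricks",
    "spark_etl": "Databricks",
}


def route(workloads):
    """Group workload names by target platform; unknown kinds are flagged for review."""
    plan = {}
    for name, kind in workloads.items():
        platform = ROUTING_RULES.get(kind, "needs review")
        plan.setdefault(platform, []).append(name)
    return plan


# Hypothetical retail workloads, echoing the example in the text.
retail_workloads = {
    "customer_dashboards": "bi_reporting",
    "iot_personalization": "streaming",
    "demand_forecasting": "ml_training",
    "graph_recommendations": "graph_analytics",
}
for platform, names in route(retail_workloads).items():
    print(f"{platform}: {', '.join(names)}")
```

Keeping the mapping in version control gives architecture reviews a single place to challenge where each workload runs.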

The datakulture Advantage: Vendor-Agnostic Guidance for Databricks vs Azure Synapse Decisions

Choosing between Databricks and Azure Synapse often gets derailed by vendor bias, incomplete cost modeling, and surface-level technical comparisons. Datakulture helps enterprises cut through the noise with a structured, business-aligned evaluation process that prioritizes workload fit and long-term ROI over platform hype. Rather than pushing a predetermined answer, we guide you toward the architecture that actually serves your team's operating model, cost targets, and technical requirements.

Vendor-agnostic guidance

Platform selection shouldn't be driven by existing Azure commitments or Spark preferences alone. datakulture maps your workloads to neutral, open standards like Apache Spark, dbt, and multi-cloud storage, ensuring you choose based on technical fit rather than ecosystem bias. This approach prevents costly misalignments, like forcing SQL-heavy BI teams onto Databricks notebooks or trying to run complex ML pipelines through Synapse's more limited Python environment.

Deeply technical platform evaluation

Surface-level comparisons miss critical nuances that affect real-world performance and developer experience. We dig into specifics like Synapse's limited recursive CTE support versus Databricks' Photon engine optimizations for high-concurrency queries, helping you understand which platform actually delivers for your workload patterns. Our evaluations include hands-on testing of notebook collaboration, streaming latency, and SQL query performance across realistic data volumes.

Cost optimization

Opaque pricing models (Synapse's DWU-based billing versus Databricks' DBU-based consumption) make cost prediction nearly impossible without proper modeling. datakulture builds detailed TCO projections that account for elastic cluster scaling, materialized view caching, and workload concurrency patterns. We've helped clients avoid 30-50% cost overruns by right-sizing compute resources and implementing proper auto-scaling policies before platform rollout.
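A first-pass TCO comparison often reduces to a break-even question: how many busy hours per month does it take before always-on capacity becomes cheaper than usage-based compute? The sketch below is a simplification under stated assumptions. All hourly rates are hypothetical, the 28% discount reuses the pre-purchase figure quoted earlier as an upper bound, and a real model would add storage, networking, and concurrency effects.

```python
HOURS_PER_MONTH = 730  # common approximation for an always-on resource


def provisioned_monthly_cost(hourly_rate, commit_discount=0.0, hours=HOURS_PER_MONTH):
    """Always-on capacity: billed for every hour regardless of utilization."""
    return hourly_rate * hours * (1.0 - commit_discount)


def consumption_monthly_cost(hourly_rate, busy_hours):
    """Usage-based compute: billed only for the hours clusters actually run."""
    return hourly_rate * busy_hours


def break_even_busy_hours(provisioned_cost, consumption_hourly_rate):
    """Busy hours per month above which provisioned capacity becomes cheaper."""
    return provisioned_cost / consumption_hourly_rate


# Hypothetical rates: $12/hour provisioned with a 28% commitment discount,
# $20/hour all-in for usage-based compute.
fixed = provisioned_monthly_cost(hourly_rate=12.0, commit_discount=0.28)
threshold = break_even_busy_hours(fixed, consumption_hourly_rate=20.0)
print(f"Break-even at about {threshold:.0f} busy hours per month")

for busy_hours in (100, 300, 500):
    usage = consumption_monthly_cost(20.0, busy_hours)
    cheaper = "consumption" if usage < fixed else "provisioned"
    print(f"{busy_hours} busy hours: usage=${usage:,.0f}, fixed=${fixed:,.0f} -> {cheaper}")
```

When plugging in real numbers for Azure Databricks, the usage-based hourly rate should cover both the DBU charge and the underlying virtual machine cost, since the two are billed separately.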

Data platform modernization

Legacy modernization rarely requires a complete platform rip-and-replace. We guide organizations toward coexistence strategies that leverage both platforms strategically—like orchestrating Databricks notebooks through Synapse Pipelines or using shared Delta Lake storage for seamless data flow between platforms. This approach reduces migration risk while preserving existing investments in SQL skills and Power BI integrations.

Analytics engineering

Team workflows matter as much as platform features when it comes to development velocity and code quality. We help you align tooling choices with actual development patterns—whether that means implementing dbt workflows in Synapse for SQL-first teams or building parameterized notebook libraries in Databricks for Python-heavy data science groups. The goal is removing friction from your team's daily work, not forcing them to adapt to platform constraints.

FinOps

Multi-platform architectures require disciplined cost governance to prevent runaway spending and resource sprawl. datakulture implements FinOps practices like pay-per-job metering, automated scaling policies, and cross-platform cost allocation that give you visibility and control over your data platform spend. We've seen organizations achieve 25-30% cost reductions simply by implementing proper resource tagging and idle cluster detection.
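Idle cluster detection and tag-based cost allocation can be prototyped against any inventory export before investing in tooling. This is a minimal sketch, assuming a hypothetical list of cluster records with illustrative field names; in practice the data would come from a platform API or billing export, and the field names would differ.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def find_idle_clusters(clusters, now, idle_after=timedelta(minutes=60)):
    """Return names of running clusters with no activity for longer than idle_after."""
    return [
        c["name"]
        for c in clusters
        if c["state"] == "RUNNING" and now - c["last_activity"] > idle_after
    ]


def cost_by_tag(clusters, tag_key="team"):
    """Sum month-to-date cost per tag value; untagged resources get their own bucket."""
    totals = defaultdict(float)
    for c in clusters:
        totals[c["tags"].get(tag_key, "untagged")] += c["month_to_date_cost"]
    return dict(totals)


# Hypothetical inventory snapshot; all names, tags, and costs are made up.
now = datetime(2026, 4, 30, 12, 0, tzinfo=timezone.utc)
clusters = [
    {"name": "etl-nightly", "state": "RUNNING",
     "last_activity": now - timedelta(minutes=5),
     "tags": {"team": "data-eng"}, "month_to_date_cost": 1200.0},
    {"name": "ds-sandbox", "state": "RUNNING",
     "last_activity": now - timedelta(hours=3),
     "tags": {"team": "data-science"}, "month_to_date_cost": 800.0},
    {"name": "adhoc-test", "state": "RUNNING",
     "last_activity": now - timedelta(hours=9),
     "tags": {}, "month_to_date_cost": 150.0},
    {"name": "bi-refresh", "state": "TERMINATED",
     "last_activity": now - timedelta(days=2),
     "tags": {"team": "bi"}, "month_to_date_cost": 300.0},
]

print("Idle clusters:", find_idle_clusters(clusters, now))
print("Cost by team:", cost_by_tag(clusters))
```

A report like this complements, rather than replaces, platform-level controls such as cluster auto-termination settings; the "untagged" bucket is usually the first thing worth driving to zero.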

Working with datakulture means making platform decisions based on measurable business impact rather than vendor promises or internal assumptions. Our technical depth and vendor-agnostic approach ensure you get an architecture that actually fits your constraints and grows with your business needs.

Conclusion: Choose the Platform That Fits Your Operating Model, Not Just the Feature List

The Databricks vs Azure Synapse decision ultimately comes down to strategic alignment between your platform choice and business operations, not feature parity comparisons. The platform that wins is the one that matches your workload patterns, supports your team's development style, fits your governance requirements, and delivers predictable TCO within your cost optimization framework. Whether that's Databricks for engineering-heavy ML workflows, Azure Synapse for SQL-centric analytics, or a hybrid approach leveraging both platforms depends entirely on your specific operating model.

The decision framework we've outlined—evaluating workload fit, team skills, governance maturity, cost discipline, and future architecture—gives you the structure to make this choice systematically rather than chasing platform hype or vendor promises. This is where datakulture's vendor-agnostic approach proves valuable: we help enterprises assess these real-world factors and design platform strategies that align operations, technology, and business outcomes rather than just checking feature boxes.

The right platform decision accelerates your data initiatives and reduces operational complexity. The wrong one locks you into expensive re-platforming cycles and team productivity issues. If you need structured guidance to evaluate Databricks vs Azure Synapse within your specific business context, talk to datakulture.

Frequently Asked Questions

1. What's the main difference between Databricks and Azure Synapse?

Databricks focuses on Spark-centric data engineering, ML, and lakehouse architectures, while Azure Synapse emphasizes data warehousing, ETL, and integrated analytics. Synapse uses SQL pools with MPP for structured queries and Spark pools, whereas Databricks optimizes Apache Spark runtimes with Delta Lake for distributed workloads. Both integrate with Azure services, but Databricks offers multi-cloud flexibility while Synapse provides deeper Azure-native integration.

2. Is Azure Synapse better for SQL data warehousing?

Yes, Azure Synapse excels in SQL data warehousing with its dedicated SQL pools providing MPP architecture, query caching, and tight Power BI integration. It supports T-SQL for enterprise BI and reporting on structured data with familiar SQL Server semantics. Databricks handles warehousing via Delta Lake but lacks the full T-SQL depth and traditional BI tooling integration that many enterprise teams expect.

3. Is Databricks better for ETL and big data engineering?

Yes, Databricks is superior for ETL and big data engineering due to its optimized Spark engine, notebooks, and streaming capabilities for complex pipelines. It handles advanced transformations on semi-structured data with Spark and Delta Lake, integrates with MLflow when pipelines feed ML models, and provides collaborative notebook environments that data engineers prefer. Synapse offers ETL via Data Factory but is less flexible for high-scale Spark workloads requiring custom transformations.

4. Can Databricks and Azure Synapse be used together?

Yes, they complement each other in hybrid setups, with Synapse handling warehousing and BI, while Databricks manages engineering and ML on shared Azure Data Lake storage. Many enterprises use both for end-to-end workflows—Databricks for data preparation and feature engineering, then Synapse for consumption and reporting. Integration via Azure services like Data Factory enables seamless data movement between platforms.

5. Which platform is cheaper at enterprise scale: Synapse or Databricks?

Costs vary by workload: Synapse offers predictable pricing for consistent warehousing via provisioned pools, while Databricks is cost-effective for variable, high-intensity Spark tasks via DBU usage. At scale, optimize Synapse for steady BI workloads and Databricks for bursty processing; real-world audits show 20-30% savings with hybrid approaches. The key is matching compute patterns to pricing models rather than choosing based on list prices alone.

6. Is Azure Synapse still strategic if we're also considering Microsoft Fabric?

Yes, Synapse remains strategic for dedicated SQL warehousing and legacy workloads, even as Fabric unifies analytics capabilities. It provides mature MPP features and enterprise governance controls not fully replicated in Fabric yet. Teams often transition incrementally while leveraging Synapse's proven Azure ecosystem integration, treating it as a bridge to future Fabric adoption rather than a dead-end investment.

7. How long does a migration or implementation usually take?

Implementations take 3-6 months for initial setups, with migrations spanning 6-12 months depending on data volume and complexity. Warehousing migrations to Synapse average 4 months with Data Factory integration, while Databricks lakehouse setups are faster (2-4 months) for teams with existing Spark expertise. Timelines extend with custom integrations, compliance requirements, or complex legacy system dependencies that require careful data validation and cutover planning.

by Subu

With over two decades of experience, Subu, aka Subramanian, is a senior solution architect who has built data warehousing solutions, led cloud migration projects, and designed scalable single sources of truth (SSOTs) for global enterprises. He brings a wealth of knowledge rooted in years of hands-on expertise while constantly updating himself on the latest technologies. Beyond architecture, he leads and mentors a large team of data engineers, ensuring every solution is both future-ready and reliable.