Databricks vs BigQuery: Cost, Performance & Architecture Compared

A practical comparison of Databricks and BigQuery covering cost, performance, architecture, and real-world use cases. Understand how each platform handles data engineering, analytics, and scaling so you can choose the right fit for your team and workload.

Subu

Apr 30, 2026

8 mins

Introduction: What Databricks and BigQuery Are—and Why This Decision Matters

When technical teams evaluate modern data platforms, the choice between Databricks and BigQuery often comes down to fundamentally different architectural philosophies. Databricks operates as a unified lakehouse platform built on Apache Spark, designed to handle data engineering, analytics, and machine learning workflows within a single environment that supports multiple programming languages and open data formats. BigQuery, by contrast, is a fully managed, serverless cloud data warehouse optimized specifically for large-scale SQL analytics with automatic scaling and built-in machine learning capabilities.

This platform decision carries significant weight for data teams because it shapes not just immediate technical capabilities, but long-term operational patterns around cost control, team skill requirements, and architectural flexibility. A platform owner modernizing an enterprise stack faces a choice between BigQuery's streamlined SQL analytics experience for business users and Databricks' broader environment designed for engineering-heavy, ML-driven workloads that span batch processing, streaming, and collaborative data science.

The real decision criteria extend beyond feature comparisons into practical business considerations: how your architecture handles mixed workloads, whether your team prefers serverless simplicity or lakehouse flexibility, how pricing models align with your usage patterns, what skills your team brings to platform operations, and how much operational complexity you're prepared to manage. The goal is to identify which platform delivers better business outcomes for your specific data strategy, team structure, and cost optimization goals.

Understanding these trade-offs upfront prevents expensive platform decisions that look good on paper but create operational friction, unexpected costs, or capability gaps when deployed at scale.

Databricks vs BigQuery at a Glance: Where the Trade-Offs Actually Show Up

The choice between these platforms hinges on fundamental differences in architecture, cost behavior, and operational complexity. This comparison matrix breaks down the key decision criteria that matter most to data teams evaluating long-term platform fit.

| Decision area | Databricks | BigQuery | Business implication |
| --- | --- | --- | --- |
| Core architecture | Lakehouse on Apache Spark with managed clusters, multi-cloud support | Serverless with separated storage (Colossus) and compute (Dremel), GCP-native | Databricks suits engineering teams needing cloud flexibility; BigQuery favors GCP-committed analytics teams wanting zero infrastructure management |
| Primary workload strengths | ML pipelines, streaming, batch processing via Photon/Delta optimizations | Ad-hoc SQL analytics, BI dashboards, auto-slot allocation for predictable queries | Data science and engineering-heavy workloads lean Databricks; BI-centric teams with SQL-first needs favor BigQuery |
| Pricing model | DBUs plus underlying cloud infrastructure costs, cluster-based with reservations | Per-TiB scanned on-demand or capacity-based slot pricing (BigQuery editions, which replaced the older flat-rate plans), separate storage costs | BigQuery rewards query optimization and narrow scans; Databricks requires cluster management discipline to control costs |
| Performance pattern | Consistent for complex transformations, requires cluster tuning for optimal concurrency | Strong for interactive analytical queries, auto-scaling slots handle spikes | Predictable large-scale ETL favors Databricks; variable analytical workloads suit BigQuery's serverless model |
| AI/ML capabilities | Native MLflow integration, Delta Lake, full model lifecycle management | BigQuery ML for SQL-based models, Gemini integration for analysts | Advanced ML teams choose Databricks for flexibility; business analysts prefer BigQuery's SQL-native approach |
| Ease of operations | Requires Spark expertise, cluster management, Unity Catalog governance setup | SQL-first interface, auto-managed infrastructure, IAM-based security | Small teams favor BigQuery's simplicity; engineering organizations can leverage Databricks' configurability |
| Ecosystem and portability | Multi-cloud, open formats (Delta Lake), extensive connector library | GCP-optimized, strong integration with Google Workspace and Analytics suite | Multi-cloud strategies suit Databricks; GCP-committed organizations maximize BigQuery integration value |
| Governance and security posture | Unity Catalog for lineage and sharing, requires configuration | Built-in column-level security, automatic encryption, simpler compliance setup | Databricks offers granular control for complex governance; BigQuery reduces compliance overhead for standard use cases |

These trade-offs reveal that "better" depends entirely on your team's workload shape, cloud strategy, and operational tolerance. BigQuery optimizes for analytical simplicity and cost predictability, while Databricks provides broader platform capabilities at higher operational complexity.

Architecture and compute model

The architectural divide shapes everything else. Databricks builds on Spark clusters you configure and scale, giving you control over compute resources but requiring infrastructure decisions. BigQuery abstracts all infrastructure behind a serverless interface—you send SQL, it returns results, with automatic scaling handled invisibly.

Performance and workload behavior

Performance patterns reflect these architectural choices. Databricks delivers consistent throughput for complex, multi-stage data pipelines where you can optimize cluster configurations for specific workloads. BigQuery excels at analytical queries that scan moderate data volumes, with automatic slot allocation handling concurrent users without manual tuning.

Pricing logic and operational burden

Cost structures mirror the complexity trade-off. BigQuery's per-TB scanning model rewards well-designed queries and benefits teams with predictable analytical patterns. Databricks' DBU plus infrastructure model requires active cluster management to avoid waste, but offers more control over cost optimization strategies for engineering workloads.
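To make the two billing shapes concrete, here is a minimal Python sketch of how each bill is driven. The class names and every rate in it are hypothetical placeholders chosen for easy arithmetic, not vendor list prices; plug in your own contract rates before drawing conclusions.

```python
from dataclasses import dataclass

# Every rate here is a hypothetical placeholder, not a vendor list price.


@dataclass
class ScanPricing:
    """Scan-based billing: you pay for the data each query reads."""
    price_per_tib: float  # assumed on-demand price per TiB scanned

    def monthly_cost(self, tib_scanned: float) -> float:
        return tib_scanned * self.price_per_tib


@dataclass
class ClusterPricing:
    """Cluster-based billing: you pay for every hour the cluster is up."""
    dbus_per_hour: float   # assumed DBU consumption of the cluster
    price_per_dbu: float   # assumed platform charge per DBU
    infra_per_hour: float  # assumed cloud VM cost per hour

    def monthly_cost(self, cluster_hours: float) -> float:
        hourly = self.dbus_per_hour * self.price_per_dbu + self.infra_per_hour
        return cluster_hours * hourly


scan = ScanPricing(price_per_tib=5.0)
cluster = ClusterPricing(dbus_per_hour=10.0, price_per_dbu=0.5, infra_per_hour=3.0)

# Narrower scans cut the scan-based bill; shorter uptime cuts the cluster bill.
print(scan.monthly_cost(tib_scanned=40.0))       # 200.0
print(scan.monthly_cost(tib_scanned=10.0))       # 50.0
print(cluster.monthly_cost(cluster_hours=20.0))  # 160.0
```

The point of the sketch is the cost driver, not the totals: the scan-based bill moves with how much data queries read, while the cluster bill moves with how long compute stays up.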

A Practical Decision Framework: Choose by Workload, Team, and Time-to-Value

The choice between Databricks and BigQuery isn't about feature checklists—it's about matching platform strengths to your operational reality. Here's a decision framework that cuts through vendor positioning to focus on workload patterns, team capabilities, and business outcomes that actually matter.

Choose Databricks if...

Your workloads extend beyond SQL analytics. If you're processing unstructured data, running real-time streaming pipelines, or building ML models alongside traditional BI, Databricks' Spark-based lakehouse architecture handles the complexity better than trying to force everything through a SQL-first warehouse. Manufacturing companies tracking IoT sensor data or media firms processing video content typically see faster time-to-insight with unified data engineering.

You have code-first data teams. Teams comfortable with Python, Scala, or Spark notebooks will leverage Databricks' collaborative environment for both engineering and AI workloads. Data science teams report 2-3x faster model deployment when they can prototype, train, and operationalize models in the same platform rather than stitching together separate tools.

Cluster control and concurrency matter for your SLAs. When you need predictable performance for operational applications or want to optimize compute for specific workload patterns, Databricks' autoscaling clusters give you the control to tune performance and costs. E-commerce platforms running real-time recommendation engines often need this level of infrastructure management.

Multi-cloud strategy or vendor lock-in concerns drive architecture decisions. Delta Lake's open format approach ensures that your data assets remain portable across cloud providers, which matters for enterprises with regulatory requirements or strategic flexibility needs.

📍 Also read: Snowflake vs Databricks

Choose BigQuery if...

SQL analytics and BI dominate your data workloads. For teams primarily running dashboards, reports, and ad-hoc queries against structured data, BigQuery's serverless architecture eliminates infrastructure management while auto-scaling to handle massive scans. Marketing teams analyzing campaign performance across terabytes of event data typically achieve faster ROI with zero ops overhead.

Your team consists mainly of SQL-focused analysts. If your data professionals are comfortable with ANSI SQL but lack Python or Spark expertise, BigQuery's familiar query interface with slot reservations (capacity-based pricing, which replaced the older flat-rate plans) delivers predictable costs and performance. BI dashboards load 5x faster for retail reporting teams who can optimize their SQL rather than learning new programming paradigms.

You're standardized on Google Cloud Platform. Native GCP integration means seamless connections to Vertex AI, Cloud Storage, and other Google services without egress fees or complex networking. Finance firms processing regulatory data often benefit from this tight ecosystem integration and simplified security model.

Quick wins matter more than long-term platform flexibility. When business stakeholders need analytics capabilities in weeks rather than months, BigQuery's serverless model delivers immediate value for optimized queries while you assess longer-term data strategy needs.

📍 Also read: Snowflake vs BigQuery

Consider a hybrid or phased approach if...

You have genuinely mixed workload requirements. Many organizations use BigQuery for SQL-heavy BI while running ETL and ML workloads on Databricks, with federated queries bridging the platforms. Media companies often achieve 25% cost reductions by matching each workload to its optimal platform rather than forcing everything into a single solution.

Team skills are evolving or budget constraints require staged adoption. Start with BigQuery for immediate BI needs while building Databricks expertise for future ML initiatives, or vice versa. Healthcare providers frequently validate their data strategy on real workloads before committing to full platform migration.

Your concurrency and governance requirements are still being defined. Begin with serverless BigQuery to understand query patterns, then add Databricks clusters for peak loads or specialized processing. This approach lets you optimize spending based on actual usage rather than theoretical capacity planning.
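The three branches of this framework can be written down as a rough scoring rule. The sketch below is one possible encoding, not a validated model: the `TeamProfile` fields, the equal weighting, and the tie-breaking toward a hybrid approach are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    sql_bi_dominant: bool        # dashboards, reports, ad-hoc SQL dominate
    needs_ml_or_streaming: bool  # ML, streaming, or unstructured data in scope
    code_first_team: bool        # comfortable with Python, Scala, or Spark
    committed_to_gcp: bool       # standardized on Google Cloud
    multi_cloud_required: bool   # portability or lock-in concerns matter


def recommend(p: TeamProfile) -> str:
    """Count the signals for each platform; equal weights are an assumption."""
    databricks = sum([p.needs_ml_or_streaming, p.code_first_team, p.multi_cloud_required])
    bigquery = sum([p.sql_bi_dominant, p.committed_to_gcp, not p.code_first_team])
    # Strong signals on both sides, or a tie, point to a hybrid or phased approach.
    if (databricks >= 2 and bigquery >= 2) or databricks == bigquery:
        return "hybrid"
    return "Databricks" if databricks > bigquery else "BigQuery"


analysts = TeamProfile(True, False, False, True, False)
engineers = TeamProfile(False, True, True, False, True)
mixed = TeamProfile(True, True, True, True, False)

print(recommend(analysts))   # BigQuery
print(recommend(engineers))  # Databricks
print(recommend(mixed))      # hybrid
```

A real evaluation would weight these signals by business impact and add SLA and budget inputs; the value of writing the rule down is that it forces the team to state its assumptions explicitly.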

The Hidden Decision Layer: Total Cost, Governance, and Long-Term Flexibility

Platform selection conversations often focus on feature matrices and list pricing, but the real financial impact emerges from operational patterns, governance maturity, and implementation discipline. Smart buyers dig deeper into total cost behavior, ecosystem lock-in risks, and the organizational changes required to extract value from either platform.

Total cost is more than compute pricing

Your actual spend will likely double initial estimates once you factor in storage growth, data movement, professional services, and the biggest cost driver: inefficient usage patterns. BigQuery's serverless model seems simple until undisciplined queries inflate slot consumption by 2-3x, turning predictable budgets into monthly surprises. Databricks appears cost-controlled until cluster cold starts and idle resources add $50K-$200K annually at enterprise scale. Unoptimized workloads consistently drive 200-300% budget overruns in both platforms, making FinOps discipline more valuable than vendor rate negotiations.
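The two waste patterns described above reduce to simple arithmetic. The following Python sketch uses hypothetical inputs (cluster count, idle hours, hourly rate, and inflation factor are all assumed values) to show how quickly idle compute and inflated consumption add up.

```python
def annual_idle_cost(clusters: int, idle_hours_per_day: float,
                     hourly_rate: float, days: int = 365) -> float:
    """Cost of clusters that are running but doing no useful work."""
    return clusters * idle_hours_per_day * hourly_rate * days


def inflated_monthly_cost(planned_monthly_cost: float,
                          inflation_factor: float) -> float:
    """Planned spend scaled by how much undisciplined usage inflates it."""
    return planned_monthly_cost * inflation_factor


# Hypothetical inputs: 10 clusters idling 4 hours a day at 8.0 per hour.
print(annual_idle_cost(clusters=10, idle_hours_per_day=4.0, hourly_rate=8.0))
# 116800.0

# Hypothetical inputs: a 5,000 monthly plan inflated 2.5x by unoptimized queries.
print(inflated_monthly_cost(planned_monthly_cost=5000.0, inflation_factor=2.5))
# 12500.0
```

Measuring the real idle hours and inflation factor from billing exports is the first step of any FinOps review; the formulas themselves are trivial once those inputs are known.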

Governance and ecosystem fit affect adoption

Long-term platform value depends heavily on data ownership models and ecosystem integration. Databricks enables data ownership through open formats like Delta Lake in your own storage, reducing portability risk compared to BigQuery's proprietary ties to GCP services. However, governance maturity often matters more than technical architecture—poor standardization across teams creates rework that dominates long-term spend regardless of which platform you choose. Ecosystem lock-in amplifies TCO through talent mismatches, integration complexity, and the hidden costs of working around vendor limitations as your needs evolve.

Migration and operating model changes shape ROI

The biggest ROI killer isn't technical migration complexity; it's the organizational ownership gaps that emerge post-implementation. Self-managed Databricks clusters demand rigorous resource policies to prevent runaway costs, while BigQuery's serverless ease can mask expensive query inefficiencies until monthly bills arrive. A mid-market firm switching to Databricks saw a 150% compute overrun from unmonitored ML experiments and missing FinOps quotas, delaying ROI by six months. Clear ownership for optimization, monitoring, and cost discipline determines whether your platform investment delivers value or becomes a costly learning experience that requires consulting fixes later.
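A quota check of the kind that was missing in that example can be very small. The sketch below is a hypothetical guard, assuming a simple linear run-rate projection; the function names and the figures are illustrative and not tied to either platform's billing API.

```python
def projected_month_end_spend(spend_to_date: float, day: int,
                              days_in_month: int) -> float:
    """Linear run-rate projection: average daily spend times days in the month."""
    if not 1 <= day <= days_in_month:
        raise ValueError("day must be between 1 and days_in_month")
    return spend_to_date / day * days_in_month


def over_quota(spend_to_date: float, day: int, days_in_month: int,
               monthly_quota: float) -> bool:
    """True when the projected month-end spend exceeds the agreed quota."""
    return projected_month_end_spend(spend_to_date, day, days_in_month) > monthly_quota


# Hypothetical team: 6,000 spent by day 10 of a 30-day month, quota of 12,000.
print(projected_month_end_spend(6000.0, day=10, days_in_month=30))       # 18000.0
print(over_quota(6000.0, day=10, days_in_month=30, monthly_quota=12000.0))  # True
```

Run daily against per-team spend, a check like this surfaces an overrun in the second week of the month rather than on the invoice.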

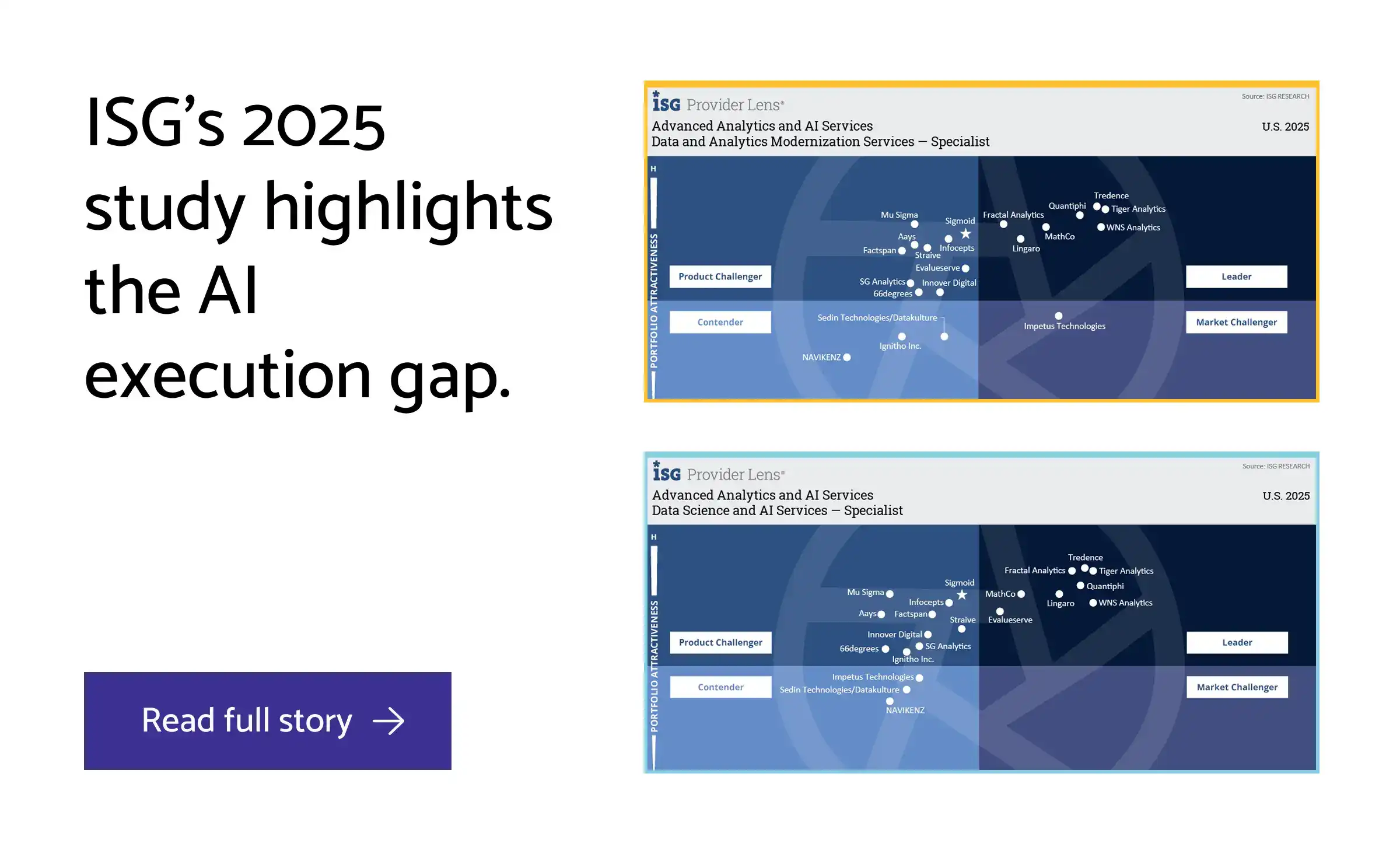

How datakulture Addresses Databricks vs BigQuery Challenges

The platform trade-offs between BigQuery's serverless analytics and Databricks' lakehouse flexibility create real decision paralysis for data teams. Evaluation fatigue, cost uncertainty, and implementation risk compound when organizations lack vendor-agnostic guidance. datakulture addresses these pain points through deep technical specialization in both platforms, helping teams navigate architecture decisions without the bias of vendor-led consulting or generic cloud advisory.

Vendor-agnostic platform guidance

datakulture provides impartial assessments that weigh BigQuery's automatic slot-based compute against Databricks' collaborative notebooks and horizontal scaling based on your actual workload patterns. Rather than defaulting to ecosystem preferences, we match technical requirements—like real-time dashboard concurrency or ML pipeline complexity—to platform strengths. This neutral stance eliminates vendor lock-in risks and reduces selection cycles, helping CTOs make architecture decisions grounded in business context rather than sales narratives.

Cloud data stack design, build, and optimization

datakulture engineers integrated stacks that leverage BigQuery's columnar storage efficiency alongside Databricks' Delta Lake optimizations, solving the integration challenges that emerge across ETL, streaming, and analytics workflows. When evaluating petabyte-scale needs, we design hybrid architectures that balance BigQuery's Dremel query speed with Databricks' Photon engine for different workload types. Our approach addresses the real-world complexity of connecting serverless analytics with engineered data pipelines, delivering measurably faster deployment cycles.

Cost optimization

datakulture analyzes the cost behavior differences between BigQuery's on-demand versus capacity-based (slot) pricing and Databricks' cluster autoscaling, identifying overprovisioning patterns and idle resource waste specific to each platform. We implement platform-specific optimization techniques (partition pruning for BigQuery, cluster sizing for Databricks) that address unpredictable scaling costs. Our cost modeling goes beyond rate cards to forecast actual spend based on query patterns, data growth, and team usage behaviors.
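Partition pruning is easiest to appreciate with numbers. The Python sketch below simulates a date-partitioned table and compares the data read by an unfiltered query with one that filters on the partition column. The table layout, sizes, and helper names are assumptions for illustration; it models the idea only and does not call BigQuery.

```python
from datetime import date, timedelta
from typing import Dict, Optional


def build_partitions(start: date, days: int, gib_per_day: float) -> Dict[date, float]:
    """Simulate a table with one partition per day, all the same size."""
    return {start + timedelta(days=i): gib_per_day for i in range(days)}


def gib_scanned(partitions: Dict[date, float],
                first: Optional[date] = None,
                last: Optional[date] = None) -> float:
    """Sum only the partitions that a date filter on the partition column keeps."""
    return sum(
        size for day, size in partitions.items()
        if (first is None or day >= first) and (last is None or day <= last)
    )


# Hypothetical table: 90 daily partitions of 20 GiB, starting 2026-01-01.
table = build_partitions(date(2026, 1, 1), days=90, gib_per_day=20.0)

full_scan = gib_scanned(table)  # no filter: every partition is read
last_week = gib_scanned(table, first=date(2026, 3, 25), last=date(2026, 3, 31))

print(full_scan)   # 1800.0
print(last_week)   # 140.0
```

Under scan-based billing the filtered query reads, and is charged for, a small fraction of the table, which is why filtering on the partition column is usually among the first optimizations to check.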

FinOps

datakulture applies FinOps principles to monitor and optimize the distinct resource consumption patterns of each platform, including practical concurrency limits such as BigQuery's per-project query concurrency and queuing, and the number of concurrent queries a single Databricks cluster can serve well. We build real-time cost dashboards that track reserved versus autoscaled spend, enabling predictable budgeting across different compute models. Our FinOps approach delivers ongoing visibility into platform efficiency, preventing the cost surprises that often emerge months after initial implementation.

Data platform modernization

datakulture modernizes legacy systems into BigQuery or Databricks lakehouse architectures, accounting for the tunability trade-off: BigQuery prioritizes operational simplicity, while Databricks exposes Spark-level customization. We design migration paths that preserve existing investments while unlocking platform-specific capabilities, whether that's BigQuery's serverless scaling or Databricks' unified analytics environment. Our modernization approach balances technical advancement with operational stability, ensuring performance improvements without disrupting business continuity.

Analytics engineering

datakulture streamlines analytics engineering workflows by optimizing the distinct development paradigms of each platform—Databricks' notebook-based collaboration for ML and BigQuery's SQL-centric processing for BI workloads. We address the learning curve challenges that slow team adoption, implementing development patterns that maximize each platform's strengths. Our analytics engineering expertise reduces development friction, helping teams achieve faster iteration cycles and more reliable data products regardless of platform choice.

datakulture's proven capabilities in both platforms enable confident decisions backed by implementation expertise. Whether you're evaluating trade-offs or optimizing an existing choice, our vendor-agnostic approach delivers measurable business impact.

Conclusion: The Best Platform Is the One Your Team Can Operate, Optimize, and Scale

The choice between Databricks and BigQuery ultimately comes down to workload fit, operating model, and cost behavior, not just feature checklists. Databricks excels in complex ETL, streaming, and ML pipelines where you need cluster control, with costs driven by DBUs plus cloud infrastructure. BigQuery suits interactive SQL, BI, and ad-hoc analytics with its serverless approach and scan-based pricing that can range from cost-effective to unpredictable depending on query patterns. The decision framework outlined earlier should guide you toward mapping your actual SLAs, concurrency requirements, and total cost of ownership, factoring in real data volumes, team capabilities, and migration complexity.

This is where datakulture's vendor-agnostic, technically grounded guidance proves valuable. We help teams navigate these tradeoffs without bias, ensuring you execute the right decision based on your specific operating realities rather than vendor narratives. If you're still weighing slots versus clusters, talent requirements, or long-term flexibility after comparing architectures and pricing models, an objective assessment beats defaulting to the loudest sales pitch.

Ready to align your platform choice with your business context? Talk to datakulture.

FAQ: Common Questions Asked About Databricks vs BigQuery

1. Is Databricks a data warehouse like BigQuery?

No, Databricks is a unified lakehouse platform built on Apache Spark for data engineering, ML, and analytics, while BigQuery is a fully managed, serverless data warehouse optimized for SQL queries. Databricks combines lake and warehouse features for flexibility across diverse workloads. This architectural difference matters if you need capabilities beyond pure SQL analytics.

2. Which is cheaper: Databricks or BigQuery?

It depends entirely on your usage patterns. BigQuery's on-demand pricing charges by the volume of data scanned, which is often cheaper for ad-hoc SQL queries, while Databricks uses cluster-based pricing that suits predictable ETL and ML workloads but can cost more for variable loads. BigQuery also offers slot reservations (capacity-based pricing) for heavy, consistent usage. Run trials with your actual query patterns for accurate cost modeling.
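One quick check when weighing scan-based against reserved capacity is the break-even volume. The sketch below assumes a fixed monthly capacity cost that fully covers the workload and uses hypothetical rates throughout; real reservations also involve autoscaling and commitment terms that this ignores.

```python
def break_even_tib(monthly_capacity_cost: float, price_per_tib: float) -> float:
    """Monthly TiB scanned at which on-demand cost equals the capacity cost."""
    return monthly_capacity_cost / price_per_tib


def cheaper_option(tib_scanned: float, monthly_capacity_cost: float,
                   price_per_tib: float) -> str:
    """Compare the on-demand bill with a fixed capacity bill for one month."""
    on_demand = tib_scanned * price_per_tib
    return "on-demand" if on_demand <= monthly_capacity_cost else "capacity"


# Hypothetical rates: 5.0 per TiB on demand, 2,000 per month for reserved slots.
print(break_even_tib(monthly_capacity_cost=2000.0, price_per_tib=5.0))  # 400.0
print(cheaper_option(100.0, monthly_capacity_cost=2000.0, price_per_tib=5.0))  # on-demand
print(cheaper_option(600.0, monthly_capacity_cost=2000.0, price_per_tib=5.0))  # capacity
```

Teams scanning well below the break-even volume usually stay on demand, while teams consistently above it have a reason to model reserved capacity in more detail.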

3. Is BigQuery faster than Databricks for SQL analytics?

BigQuery often excels in ad-hoc SQL speed via its Dremel engine and BI Engine for reporting and dashboards, but Databricks matches or beats it for complex transformations using Spark's distributed processing. Performance varies significantly by query complexity, data size, and optimization. Test both platforms with your specific datasets and query patterns for meaningful comparisons.

4. Is Databricks better for machine learning and AI workloads?

Yes, Databricks leads with Mosaic AI, Unity Catalog, and comprehensive Spark integration for ML pipelines, model fine-tuning, and multi-language support, versus BigQuery's solid but more limited BigQuery ML and Gemini integration. Choose Databricks for engineer-led, complex AI workflows. BigQuery fits simpler ML needs within GCP-centric environments.

5. Which platform is easier for a small data team to manage?

BigQuery wins for small teams with its serverless architecture, zero infrastructure management, and straightforward SQL focus, while Databricks requires more expertise for cluster management and Spark optimization. BigQuery minimizes operational overhead and learning curve. Consider Databricks only if your small team has strong engineering skills and expanding ML requirements.

6. Can organizations use Databricks and BigQuery together?

Yes. Databricks runs on GCP and can work with BigQuery storage, and integrations such as BigLake (for federated access) or open data formats let both platforms share the same data. This hybrid approach works well for SQL analytics in BigQuery and ML pipelines in Databricks. Open formats like Delta Lake help avoid lock-in while maximizing each platform's strengths.

7. How hard is it to migrate from one platform to the other?

Migration complexity is moderate and highly situational—SQL warehouses migrate more easily to BigQuery, while ETL and ML pipelines fit better in Databricks. Key challenges include SQL dialect differences, custom functions, and governance policies. Plan parallel runs and start with pilot workloads. Using open data formats from the beginning makes future platform shifts significantly easier.

by Subu

With over two decades of experience, Subu, aka Subramanian, is a senior solution architect who has built data warehousing solutions, led cloud migration projects, and designed scalable single sources of truth (SSOTs) for global enterprises. He brings a wealth of knowledge rooted in years of hands-on expertise while constantly updating himself on the latest technologies. Beyond architecture, he leads and mentors a large team of data engineers, ensuring every solution is both future-ready and reliable.