Best ETL Tools to consider: Features and pricing

ETL tools are crucial elements for data engineering teams. Read this blog and find some of the best ETL tools our seasoned data engineers use for flawless data transformation and robust data warehousing.

Suresh

June 6, 2025 | 7 min read

Key takeaways

The factors you should consider beyond pricing and the type of tool.

List of best ETL tools suggested by experienced data engineers.

The tools were selected based on the features, ease of use, cost, scalability, and integration options they offer.

Customer reviews and detailed feedback on popular platforms like G2, GetApp, and other websites were also considered.

Tools are categorized by type: open-source, cloud, and enterprise.

Find the right fit for your organization, regardless of its size, data complexity, and requirements.

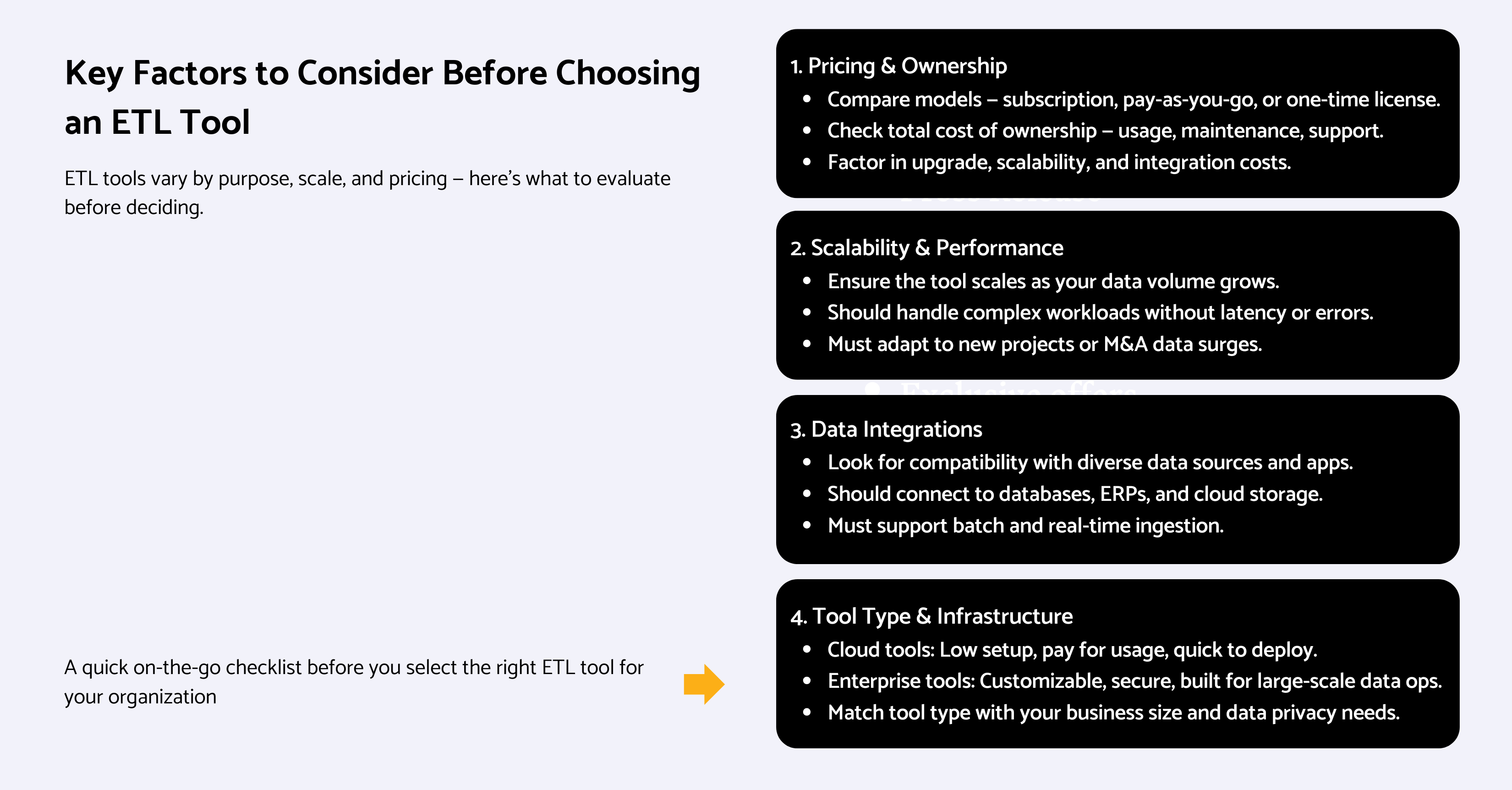

Consider the following before choosing ETL tools

What is ETL in data engineering?

ETL stands for Extract, Transform, Load: a data pipelining process that fetches data from sources, transforms it into a desired format, and loads it into the destination (data warehouse, data lakehouse, etc.). Some factors you should consider while selecting an ETL tool to perform these activities are as follows.
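To make the three stages concrete, here is a minimal sketch using only the Python standard library. The sample CSV, the `orders` table, and the function names are hypothetical; a real pipeline would read from actual sources and write to a real warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical source data; a real pipeline would read from a file, API, or database.
RAW_CSV = """order_id,customer,amount
1, alice ,10.50
2,BOB,20.00
3,carol,
"""

def extract(text):
    """Extract: read raw rows from the source."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: normalize names, cast types, and drop rows with no amount."""
    cleaned = []
    for row in rows:
        if not row["amount"].strip():
            continue  # skip incomplete records
        cleaned.append(
            (int(row["order_id"]), row["customer"].strip().lower(), float(row["amount"]))
        )
    return cleaned

def load(rows, conn):
    """Load: write the cleaned rows into the destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

def run_etl(conn):
    """Run the three stages in order and return what landed in the destination."""
    load(transform(extract(RAW_CSV)), conn)
    return conn.execute(
        "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
    ).fetchall()
```

Calling `run_etl(sqlite3.connect(":memory:"))` runs the whole pipeline against an in-memory database. The incomplete third row never reaches the destination because the transform step runs before the load.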

Pricing

ETL tools come with different pricing plans: subscription-based, pay-as-you-go, one-time licensing, freemium models, and many more. The plan depends on whether it’s an enterprise or a cloud version. These pricing models include usage and maintenance costs, support, other add-on features, etc. So, it’s important to look beyond the upfront costs and consider the total cost of ownership.

Also note that there will be other costs for upgrades, high-end features, and scalability, or when you have to handle a specific type of data or custom integrations.

Scalability

Your ETL tool must scale no matter how much the data load increases in the future. That’s the way to future-proof your data infrastructure to support your business growth. Without scalable tools, you cannot upgrade your current capabilities and may end up spending more than you planned. Also, data complexity and overload arise for many reasons, such as undertaking a new temporary project or going through a merger or acquisition, and the tool should be flexible enough to handle them without creating discrepancies.

Data integrations

The major functionality of ETL tools is data ingestion. To do that, a tool should be able to integrate with multiple data sources and platforms: cloud storage platforms, ERPs, enterprise applications, databases, and even real-time data streams, provided your data warehouse can handle them.

Tool types

Cloud-based tools are known for low upfront costs, accessibility, and ease of use. Without investing in heavy hardware arrangements or support from backend IT teams, your company can use cloud-based ETL tools and pay only for usage and computing resources.

Hence, this is ideal for small and mid-sized businesses with low to medium requirements.

Companies with heavy data workloads, stringent regulations, and data privacy requirements can go for enterprise versions. They are one-time investments but can be customized to suit the business's operational needs.

Recommended read: What is ETL?

ETL & ELT - Can it perform both?

ETL and ELT are slightly different. ETL extracts and transforms the data before loading it into the destination, whereas ELT loads the raw data first and performs the transformation last, inside the destination itself. Many modern tools support both ETL and ELT workloads. While this might not be a crucial requirement, having a tool that supports both can be useful for complex pipeline management. Tools that support both ETL and ELT include Apache NiFi, Talend, Informatica, Azure Data Factory, etc.
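The difference in ordering is easiest to see in code. The sketch below shows the ELT pattern: raw rows are loaded as-is into a staging table, and the transformation runs afterwards as SQL inside the destination. SQLite stands in for a warehouse here, and the table names and sample rows are made up for illustration.

```python
import sqlite3

# Hypothetical raw rows, exactly as they arrive from the source (all strings).
RAW_ROWS = [("1", " alice ", "10.50"), ("2", "BOB", "20.00"), ("3", "carol", "")]

def run_elt(conn):
    # Load: land the raw, untransformed rows in a staging table first.
    conn.execute("CREATE TABLE raw_orders (order_id TEXT, customer TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", RAW_ROWS)

    # Transform: runs last, as SQL executed by the destination itself.
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT CAST(order_id AS INTEGER) AS order_id,
               LOWER(TRIM(customer))     AS customer,
               CAST(amount AS REAL)      AS amount
        FROM raw_orders
        WHERE amount <> ''
        """
    )
    conn.commit()
    return conn.execute(
        "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
    ).fetchall()
```

Because the raw table stays in the destination, the transformation can be rewritten and rerun later without extracting from the source again. That is one common reason teams with cloud warehouses lean towards ELT.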

Best ETL tools

"Putting out tools isn’t enough to ensure high-quality data in the end. When organizations have huge goals to set up high-end AI models, every potential error in data can exacerbate and deviate the model’s outcomes. So, to avoid expensive errors like this, data engineers hold huge responsibility to build robust ETL and BI models and choose tools wisely." - A quote from our data engineering team

Hevo Data

Hevo Data is a cloud-based data pipelining platform. Trusted and used by over 2500 data teams, it’s one of the best ETL automation tools for small and mid-sized companies. Users say that Hevo helps them set up API connectors quickly, even for large datasets.

Features

150+ ready-to-use integrations

Can connect with databases, BI platforms, and cloud storage (GCP, Amazon S3, etc).

Makes data available for analytics the moment it lands in the warehouse.

Ability to manage data stored across different geographical locations with one account.

Friendly user interface and drag-and-drop options.

Auto-cleans and formats data before it reaches the destination, which speeds up access to analytics.

Pricing

Basic version free for all users

Starter model costs $249

For enterprise requirements, contact the sales team.

Talend Open Studio (TOS)

Now part of Qlik, Talend helps with data integration and ETL for data warehousing, analytics, and AI projects. It ensures accurate and real-time data availability and works with a wide range of data sources. It has also been featured consistently by industry analysts like Gartner, Forrester, etc.

It’s more than an ETL tool and acts as a flexible, enterprise-grade data management platform.

Suitable for: Enterprises looking for end-to-end data management solutions

Features

Comes with a connector factory to connect with 400+ sources and targets (cloud, on-prem, and hybrid environments) with drag-and-drop interfaces.

Supports integration with big data components like Apache Spark, and NoSQL databases for seamless big data processing.

Talend also integrates with SQL databases like MySQL, Oracle Database, PostgreSQL, and SQL Server, supporting in-database processing such as cleaning and transforming data.

Can enable triggers and alerts upon successful ETL job execution

IDE (Integrated Development Environment) where you can handle the entire lifecycle of data integration from design to testing.

Has profiling and cleansing tools to clean up duplicate data and missing values.

Facilitates smooth team collaboration and offers tons of learning opportunities through tutorials, learning videos, and an actively growing community.

Pricing

The open-source TOS used to be available for free, but not anymore. Contact the Talend sales team for pricing of its commercial data management platforms.

Airbyte

Airbyte is an ETL tool available in both cloud and self-managed open-source formats. It solves integration challenges for data engineers. It is suitable for both small and large organizations, as it comes with enterprise and cloud versions and different payment plans.

Features

More than 300 sources and 50+ destinations are available, including data lakes, data warehouses, vector databases, etc.

Connectors are customizable. Customers can use existing connectors (up to 300) or build one using their no-code connector builder platform or low-code CDK platform.

Integrates with dev platforms seamlessly. Examples: Prefect, Dagster, dbt, etc.

Can extract and load data to suit your requirements - with incremental, batch, and full-refresh loading.

Sync frequency can be under 5 minutes, except on the cloud version, where it is one hour.
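The loading modes mentioned above differ mainly in how much data each sync moves. The following is a simplified sketch of full-refresh versus incremental loading, not Airbyte's actual implementation. The `id` and `updated_at` fields and both function names are assumptions made for illustration.

```python
def full_refresh(source_rows):
    """Full refresh: rebuild the destination from a complete copy of the source."""
    return {row["id"]: row for row in source_rows}

def incremental_sync(source_rows, destination, cursor):
    """Incremental: copy only rows changed after the saved cursor value.

    Returns the new cursor, which the next sync would start from.
    """
    new_cursor = cursor
    for row in source_rows:
        if row["updated_at"] > cursor:
            destination[row["id"]] = row
            new_cursor = max(new_cursor, row["updated_at"])
    return new_cursor

# Usage: the first sync copies everything, later syncs only move what changed.
source = [{"id": 1, "updated_at": 1}, {"id": 2, "updated_at": 2}]
destination = full_refresh(source)
source.append({"id": 3, "updated_at": 3})
cursor = incremental_sync(source, destination, cursor=2)
```

Full refresh is simpler and self-correcting but moves every row each time. Incremental loading moves far less data but depends on the source exposing a reliable cursor column such as a last-modified timestamp.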

Pricing

Monthly payments for the cloud and annual contracts for the teams and enterprises. Contact their sales for pricing.

Cloud pricing is based on the data volume.

Has a free open-source version that you can deploy right away.

Integrate.io

As the name suggests, Integrate.io helps with data integration. Other than ETL, you can also use it for CDC (Change Data Capture), data monitoring and alert activation, and REST API generation. It’s suitable for small and medium-sized organizations that are looking for low-code and low-cost ETL platforms.

Features

Has both ETL and reverse ETL workloads available with drag-and-drop options.

Performs in-pipeline transformations like data aggregation, filtering, sorting, and many other custom options using Python scripts.

Can get pipeline alerts on Slack, email, etc.

Both pre-built connectors and customizable REST API connectors available.

Can schedule ETL jobs at different time intervals.

Pricing

Based on your data load, you can choose from four plans: Starter, Professional, Expert, and Business Critical. Credit costs vary across the plans, from $2.99 for Starter down to $0.83 for custom plans. Check the detailed breakdown of plans on their pricing page.

Managed services are also available as an option with any of the four plans.

14-day trial available.

Matillion ETL

Matillion is a cloud-based ETL tool which comes with data orchestration, ETL & transformation, and pipeline management functionalities. With comprehensive functions, it’s suitable for stable mid-sized and enterprise level organizations with complex data workloads and numerous sources and destinations.

Features

No-code, easy-to-use interface that allows you to validate real-time data transformations for high accuracy.

Contains 70+ pre-built connectors for platforms like Amazon Redshift, Snowflake, Google BigQuery, etc. Due to this, it’s more apt for companies with cloud data warehouses. Custom data connectors are also available.

Comes with a built-in Git repository for version control.

Supports team collaboration and smooth CI/CD workflows.

Can keep your data up-to-date across all platforms using no-code change data capture pipelines.

Pricing

Similar to Integrate.io, Matillion pricing plans are based on credits, which depend on virtual core consumption.

Their starter pack comes with a minimum of 500 credits per month at $2 per credit, with features like job scheduling, transformation, and validation.

The advanced version charges $2.50 per credit with a minimum of 750 credits, and the enterprise version charges $2.70 per credit with a minimum of 1,000 credits.
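To compare the plans, it helps to turn the figures into a monthly estimate. The sketch below reads the numbers above as a per-credit price with a monthly minimum number of credits for each plan. That reading is an assumption, and actual invoices may be calculated differently, so treat the output as a rough estimate only.

```python
# Assumed reading of the figures above:
# plan -> (minimum credits per month, price per credit in US cents).
PLANS = {
    "starter": (500, 200),
    "advanced": (750, 250),
    "enterprise": (1000, 270),
}

def monthly_cost_usd(plan, credits_used):
    """Estimate the monthly bill, charging at least the plan's minimum credits."""
    min_credits, cents_per_credit = PLANS[plan]
    billable_credits = max(credits_used, min_credits)
    return billable_credits * cents_per_credit / 100

# Using 300 credits on the starter pack is still billed as 500 credits.
starter_estimate = monthly_cost_usd("starter", 300)    # 1000.0
advanced_estimate = monthly_cost_usd("advanced", 900)  # 2250.0
```

Under this reading, a team that consistently uses fewer credits than the plan minimum pays for unused capacity, which is worth checking before committing to a higher tier.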

Azure Data Factory

From the house of Microsoft, ADF is used mainly for data integration and pipelining purposes. It’s suitable for large-scale enterprises that are already using or familiar with the Microsoft or Azure suite of data applications. Also note that Data Factory is part of Microsoft Fabric.

Features

Contains 90+ connectors to transfer your data to on-premise, cloud, multi-cloud, SaaS, and hybrid data storage options.

Code-free and low-code ETL and ELT pipelines.

Ability to scale up and down compute and resources based on the demand.

Suitable for large teams who maintain role-based access control.

Dashboard to monitor jobs in real-time and identify any failures.

Pricing

They have a pay-as-you-go model, where they charge based on consumption. Custom pricing is available for large organizations with higher requirements.

Billed at $1 per 1,000 activity runs. An activity run is the execution or attempt of any data pipeline activity, movement, or transformation.

Check the official ADF pricing page for a detailed breakdown.
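As a rough illustration of the activity-run charge quoted above, the sketch below estimates a monthly figure from the number of pipelines and how often they run. The function names and the example workload are hypothetical, and any other consumption charges are outside the scope of this estimate.

```python
def monthly_activity_runs(pipelines, activities_per_pipeline, runs_per_day, days=30):
    """Estimate activity runs: every activity in every pipeline counts on each run."""
    return pipelines * activities_per_pipeline * runs_per_day * days

def activity_run_cost_usd(activity_runs, usd_per_thousand=1.0):
    """Apply the quoted rate of $1 per 1,000 activity runs."""
    return activity_runs / 1000 * usd_per_thousand

# Example workload: 10 pipelines, 5 activities each, triggered hourly for 30 days.
runs = monthly_activity_runs(pipelines=10, activities_per_pipeline=5, runs_per_day=24)
estimate = activity_run_cost_usd(runs)  # 36,000 runs -> 36.0 USD
```

The takeaway is that the count grows with both the number of activities and the trigger frequency, so consolidating small activities or running less often directly lowers this part of the bill.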

The above listed ETL tools are open-source and cloud-based. Let’s take a look at some of the enterprise ETL applications too.

IBM DataStage

An industry leader, IBM DataStage is available in both enterprise and cloud versions, with more or less similar features. The DataStage enterprise version is a good fit for companies that have strict regulatory requirements and manage data in on-premises storage. Anyone looking for an end-to-end data integration solution with an on-premises build can go for this.

Features

Supports both ETL and ELT pipelines with more flexibility and transparency into data movements. Pre-built connectors available to all common storage sources and relational databases.

Parallel processing to handle huge volumes of data simultaneously without lags.

IBM Watson Knowledge to protect sensitive data.

Can detect failures automatically and alert the right teams.

Can manage your entire array of data and AI workloads within the IBM environment.

Pricing

Contact their sales team for pricing.

Informatica

Informatica is a simplified cloud data integration and management solution meant for medium to large-sized organizations that are predominantly cloud-based. It can be used for ELT and ETL pipelines as well as for change data capture and data replication.

Features

Real-time dashboard to offer you visibility into operating pipelines and their performance.

Get recommendations from their in-built AI-based tool called CLAIRE on data ingestion from source platforms.

100+ no-code connectors present in this ETL tool.

With a well-integrated interface, you can access any other data management service from Informatica as well, all in one place.

Best-suited when you scale, as the tool offers volume-based pricing benefits.

Pricing

Pay-as-you-use, flexible, and cost-effective pricing model. Contact sales for more information.

Fivetran

Fivetran is an enterprise-grade ETL platform meant for data movement, transformation, and extensibility. It’s preferred by mid to large-sized companies, especially in financial services, eCommerce, web services, software, etc.

Features

Connect 500+ sources with databases, cloud storage, data lakes, or streaming apps, using automated pipelines.

Out-of-the-box connectors for SaaS, database, files, and SAP replication. Cloud-based custom connectors are available too.

Transformation happens automatically the moment data lands in the destination (for ELT workloads).

Fully automated data replication process from source to destination.

Ensures data integrity on your destination with idempotent functions.
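Idempotency here means that replaying the same batch, for example after a retry, leaves the destination in the same state as loading it once. The sketch below illustrates the idea with a keyed write in SQLite. It is not Fivetran's implementation, and the `customers` table and its columns are hypothetical.

```python
import sqlite3

def idempotent_load(conn, rows):
    """Write rows keyed by primary key, so replaying a batch creates no duplicates."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (id INTEGER PRIMARY KEY, email TEXT)"
    )
    # INSERT OR REPLACE overwrites the existing row with the same primary key
    # instead of adding a second copy.
    conn.executemany("INSERT OR REPLACE INTO customers (id, email) VALUES (?, ?)", rows)
    conn.commit()
    return conn.execute("SELECT id, email FROM customers ORDER BY id").fetchall()

# Usage: the second call simulates a retry of the same batch.
conn = sqlite3.connect(":memory:")
batch = [(1, "a@example.com"), (2, "b@example.com")]
first = idempotent_load(conn, batch)
second = idempotent_load(conn, batch)
```

After both calls, `first` and `second` hold the same two rows. With a plain `INSERT` and no primary key, the retry would have doubled the data.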

Pricing

Fivetran charges you based on MAR (monthly active rows), that is, the number of rows added, updated, or deleted every month.

Comes with a free-forever plan for low-volume workloads. Beyond that, it has Starter, Standard, and Enterprise plans with increased features and custom costs. Contact their team for a quote.
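To get a feel for how a row-based metric behaves, the sketch below counts active rows from a list of change events. It assumes that a row touched several times in the same month is counted once, identified by its table and primary key. That deduplication rule and the event format are assumptions for illustration, so check the vendor's documentation for the exact definition.

```python
def monthly_active_rows(change_events):
    """Count distinct (table, primary key) pairs touched during the month."""
    return len({(event["table"], event["pk"]) for event in change_events})

# Hypothetical change log for one month.
events = [
    {"table": "orders", "pk": 1, "op": "insert"},
    {"table": "orders", "pk": 1, "op": "update"},  # same row again, counted once
    {"table": "orders", "pk": 2, "op": "delete"},
    {"table": "users", "pk": 1, "op": "update"},   # different table, counted separately
]
active_rows = monthly_active_rows(events)  # 3
```

Under this assumption, a small table that is updated constantly costs less than a large table where many different rows change, which matters when you estimate a bill from change volume.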

Portable

This one is an open-source, cloud-based ETL solution. Dedicated to ETL operations, it can be used by companies of all sizes. Thanks to its unique range of connectors, businesses with niche-specific applications can benefit from Portable.

Features

1000+ connectors and the list is growing. If you need anything specific, you could inquire and the team will build it for you.

Automated ETL and ELT pipelines. You could choose depending on your business function.

Schema adjusts itself while data is being loaded into the destination, maintaining uniformity and preventing interruptions.

Encrypts data in transit, making data ingestion and transformation safe and secure.

Round-the-clock free support is available where all types of troubleshooting are addressed.
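The self-adjusting schema mentioned in the features can be pictured as follows: before each insert, the loader compares the incoming fields with the destination's columns and adds whatever is missing. This is a simplified sketch using SQLite, not Portable's implementation, and the function and table names are hypothetical.

```python
import sqlite3

def load_with_schema_drift(conn, table, records):
    """Insert records, adding any column the destination table does not have yet."""
    # PRAGMA table_info returns one row per column; index 1 holds the column name.
    existing = {row[1] for row in conn.execute(f"PRAGMA table_info({table})")}
    for record in records:
        for column in record:
            if not column.isidentifier():
                raise ValueError(f"unsafe column name: {column!r}")
            if column not in existing:
                conn.execute(f"ALTER TABLE {table} ADD COLUMN {column}")
                existing.add(column)
        columns = ", ".join(record)
        placeholders = ", ".join("?" for _ in record)
        conn.execute(
            f"INSERT INTO {table} ({columns}) VALUES ({placeholders})",
            list(record.values()),
        )
    conn.commit()

# Usage: the second record introduces a "country" field mid-load.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER)")
load_with_schema_drift(conn, "events", [{"id": 1}, {"id": 2, "country": "IN"}])
rows = conn.execute("SELECT id, country FROM events ORDER BY id").fetchall()
```

Rows loaded before the new column appeared simply hold `NULL` for it, so the load continues without interruption and earlier data stays valid.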

Pricing

7-day free trial available for the starter pack.

The Starter plan is priced at $200 per month. The next level, Scale, costs $1000 per month. Contact their sales team for enterprise-level pricing.

Conclusion

Extract, Transform, and Load is a crucial part of any data engineering workload. It takes care of the data adoption part, delivering reliable, real-time data to the end users. Hence, you should look beyond a tool’s basic features and pricing. Sometimes, even a demo may not be enough to predict if the tool is right for you.

The reason is well-known. Every business is different. Their data needs, applications, and storage mediums are different. The frequency at which they transmit data, the number of background data professionals involved, the analytics efforts required… Also, there is the ETL vs. ELT debate. What you need and what the tool can offer... The number of factors that play a role here is endless. If you feel the same and seek help from experienced data professionals, we are here to help.

We inspect your current workloads, analyze your future objectives, and suggest the right ETL tool that can bridge the two. Perhaps you need help setting them up? Or need a data engineer to maintain and monitor your data pipelines or set up reverse ETL workloads? We have got all of your data needs covered. Send a message below so we can get back to you.

Tools alone don’t solve ETL. Engineering does. Explore our ETL solutions.

by Suresh

Suresh, the data architect at datakulture, is our senior solution architect and data engineering lead, who brings over 9 years of deep expertise in designing and delivering data warehouse and engineering solutions. He is also a Certified Fabric Analytics Engineer Associate and played a major role in making us one of the early adopters of Fabric. He writes about what he delivers to his clients with the same precision, consistently covering trends and recent developments in the data engineering sector.